How to Get Started with AWS Fargate: A Complete Tutorial and Next Steps

Introduction

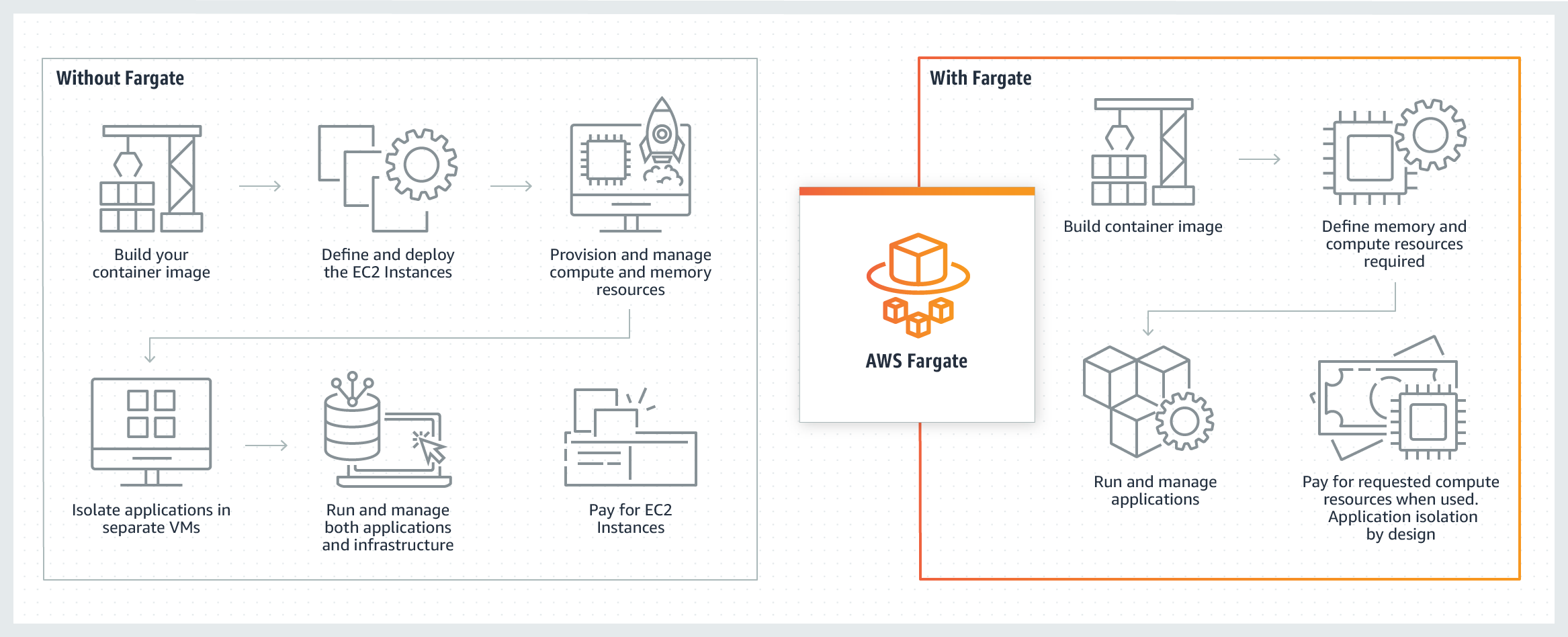

AWS Fargate is a serverless compute engine for running containers in the Amazon Web Services public cloud. As we discuss in our AWS Fargate overview, Fargate has a number of advantages to recommend it, including making your IT environment less complex and integrating with other AWS tools and services.

Given these benefits, how do you get started with AWS Fargate? In this article, we’ll walk through setting up AWS Fargate, migrating existing containers to it, and the next steps for using the platform effectively.

Achieve Cloud Elasticity with Iron

Get up and running with IronWorker’s serverless compute engine today by starting your 14-day free trial.

Setting Up AWS Fargate

AWS Fargate has a bit of a learning curve, but there are a number of AWS Fargate tutorials (e.g. “Monolith to Microservices with Docker and AWS Fargate”) to help you get started. If you prefer to leap right in, then follow the steps in this section.

To start using AWS Fargate, you should be familiar with terminology such as “containers,” “tasks,” and “clusters”:

- Containers are packages of application code together with the required libraries, frameworks, dependencies, and configurations. Bundling everything together helps applications run predictably and reliably, no matter the environment.

- Task definitions are “blueprints” that define which containers should run together in order to form a software application. A task is a running instance of a task definition.

- Clusters are logical groupings of one or more tasks.

Read through the first-run experience for AWS Fargate, which walks you through the process of creating Fargate containers, tasks, and clusters. Under the heading “Container definition,” select one of the default images (e.g. “sample-app”) and review the task definition. Click “Next” at the bottom of the page.

Now that you’ve chosen the container and task definition, you need to define your service, which sets how many instances of a task definition run simultaneously within a single cluster. If desired, you can select the “Application Load Balancer” option, which distributes incoming traffic across the running tasks (although it’s not necessary for this AWS Fargate tutorial).
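To make the idea of a service more concrete, here is a minimal sketch of the settings described above, written as a Python dictionary shaped like the parameters of the ECS CreateService API. It only builds and prints the request; it does not call AWS. The helper name `build_fargate_service_request`, the service name, and the subnet and security group IDs are placeholders we made up for illustration.

```python
import json


def build_fargate_service_request(cluster, service_name, task_definition,
                                  desired_count, subnets, security_groups):
    """Return a dict shaped like an ECS CreateService request (nothing is sent)."""
    if desired_count < 0:
        raise ValueError("desired_count must be non-negative")
    if not subnets:
        raise ValueError("awsvpc networking needs at least one subnet")
    return {
        "cluster": cluster,
        "serviceName": service_name,
        "taskDefinition": task_definition,
        # Number of simultaneous copies of the task the service keeps running.
        "desiredCount": desired_count,
        "launchType": "FARGATE",
        "networkConfiguration": {
            "awsvpcConfiguration": {
                "subnets": list(subnets),
                "securityGroups": list(security_groups),
                "assignPublicIp": "ENABLED",
            }
        },
    }


# All identifiers below are hypothetical placeholders, not real resources.
request = build_fargate_service_request(
    cluster="default",
    service_name="sample-app-service",
    task_definition="sample-app",
    desired_count=2,
    subnets=["subnet-EXAMPLE"],
    security_groups=["sg-EXAMPLE"],
)
print(json.dumps(request, indent=2))
```

If you later script your deployments, a dictionary like this could be passed to an AWS SDK call, but check the current CreateService documentation before relying on any field shown here.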

Click “Next” to start configuring your cluster. In this Fargate tutorial, the only option you can change is the name of the cluster (which is already set to “default”). Finally, click “Next” to review your settings, and click “Create” to launch the service.

Once it launches, click on “View service” to view the cluster in your Amazon ECS dashboard. From here, you can click on “Update” to modify the cluster settings, or “Delete” to clean up and finish the AWS Fargate tutorial. After that, you’re ready to start using the service for real serverless workloads.

Migrating to AWS Fargate

You may already be running containers elsewhere on AWS (e.g. on Amazon ECS or EKS with EC2 instances you manage yourself), and now you want to take advantage of AWS Fargate’s serverless capabilities. The good news is that migrating to AWS Fargate isn’t too difficult.

Fargate is intended to work with a container orchestration service such as Amazon ECS (Elastic Container Service) or Amazon EKS (Elastic Kubernetes Service). Because Fargate and ECS use the same API actions, you can manage Fargate tasks from the ECS console you already know.

Amazon provides a tutorial for migrating your Amazon ECS containers to AWS Fargate. The basic steps of this migration are as follows:

- In your task definition parameters, the setting “network mode” needs to be changed from bridge, which is the default for ECS, to awsvpc. This mode gives each of your Fargate tasks individual elastic network interfaces and IP addresses so that multiple applications can use the same port number.

- As a consequence of using awsvpc, AWS Fargate users need a service-linked Identity and Access Management (IAM) role called AWSServiceRoleForECS, which allows ECS to manage the network interfaces on your behalf.

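- Fargate tasks need a defined amount of CPU and memory at the task level. CPU can be specified in CPU units (e.g. 256) or in vCPUs (e.g. 0.25 vCPU), and memory in MiB or gigabytes (e.g. 512 or 1 GB). Only certain combinations of CPU and memory are valid.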
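The migration steps above can be sketched as a small Python function that takes an existing task definition (as a dictionary) and returns a Fargate-ready copy. This is an illustration under stated assumptions, not Amazon’s migration tooling: the function name and the sample task definition are hypothetical, the `requiresCompatibilities` field is not mentioned above but is part of the ECS task definition format, and the CPU and memory table is only a subset that you should verify against the current AWS documentation.

```python
import copy

# Assumption: a subset of CPU units -> allowed memory (MiB) pairs for Fargate.
FARGATE_MEMORY_BY_CPU = {
    256: [512, 1024, 2048],
    512: list(range(1024, 4096 + 1, 1024)),
    1024: list(range(2048, 8192 + 1, 1024)),
    2048: list(range(4096, 16384 + 1, 1024)),
    4096: list(range(8192, 30720 + 1, 1024)),
}


def to_fargate_task_definition(task_def, cpu, memory):
    """Return a copy of task_def adjusted for Fargate (the input is not modified)."""
    if memory not in FARGATE_MEMORY_BY_CPU.get(cpu, []):
        raise ValueError(f"unsupported CPU/memory combination: {cpu}/{memory}")
    migrated = copy.deepcopy(task_def)
    migrated["networkMode"] = "awsvpc"            # was "bridge" by default
    migrated["requiresCompatibilities"] = ["FARGATE"]
    migrated["cpu"] = str(cpu)                    # task-level CPU units
    migrated["memory"] = str(memory)              # task-level memory in MiB
    for container in migrated.get("containerDefinitions", []):
        for mapping in container.get("portMappings", []):
            # With awsvpc, the host port must match the container port or be
            # omitted, so we drop it here.
            mapping.pop("hostPort", None)
    return migrated


# Hypothetical task definition that used bridge networking.
ec2_task_def = {
    "family": "sample-app",
    "networkMode": "bridge",
    "containerDefinitions": [
        {
            "name": "web",
            "image": "nginx:latest",
            "portMappings": [{"containerPort": 80, "hostPort": 8080}],
        }
    ],
}

fargate_task_def = to_fargate_task_definition(ec2_task_def, cpu=256, memory=512)
print(fargate_task_def["networkMode"], fargate_task_def["cpu"], fargate_task_def["memory"])
```

Validating the CPU and memory pair up front is a design choice: it surfaces a bad combination in your own script rather than as an error when the task definition is registered.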

These are just some of the most important steps to perform an AWS Fargate migration; for the full list of possible changes, check out Amazon’s guide “Migrating Your Amazon ECS Containers to AWS Fargate.” You can also look at the Task Definitions for Amazon ECS GitHub repository, which contains multiple example task definitions for AWS Fargate.

Iron.io Serverless Tools

Speak to us to learn how IronWorker and IronMQ are essential products for your application to become cloud elastic.

AWS Fargate Next Steps

Once you’ve set up AWS Fargate or migrated your existing containers, there are still other things you need to learn to use Fargate effectively.

First, if you need consistent performance from your Fargate containers, you should set up automatic scaling, which increases or decreases the number of running tasks in a service based on the level of demand. There are multiple options to choose from for automatic scaling in Fargate:

- Target tracking scaling adjusts the number of tasks to keep a specified metric or KPI (e.g. average CPU utilization) close to a target value.

- Step scaling adjusts the number of tasks when an alarm is triggered in Amazon CloudWatch, with the size of the adjustment depending on how far the alarm threshold is exceeded.

- Scheduled scaling adjusts the number of tasks based on the current date and/or time.
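To show how the three scaling options differ, here is a deliberately simplified Python sketch. It is not the algorithm AWS uses internally; the function names, the proportional formula for target tracking, and the shape of the step and schedule tables are all our own assumptions, chosen only to illustrate the idea behind each option.

```python
import math
from datetime import time


def clamp(value, min_tasks, max_tasks):
    """Keep the task count within the configured bounds."""
    return max(min_tasks, min(max_tasks, value))


def target_tracking(current_tasks, metric_value, target_value, min_tasks, max_tasks):
    """Scale proportionally so the metric moves toward the target value."""
    desired = math.ceil(current_tasks * metric_value / target_value)
    return clamp(desired, min_tasks, max_tasks)


def step_scaling(current_tasks, metric_value, alarm_threshold, steps, min_tasks, max_tasks):
    """steps: (breach_lower_bound, tasks_to_add) pairs in ascending order."""
    breach = metric_value - alarm_threshold
    adjustment = 0
    if breach >= 0:  # the alarm has fired
        for lower_bound, tasks_to_add in steps:
            if breach >= lower_bound:
                adjustment = tasks_to_add  # the last matching step wins
    return clamp(current_tasks + adjustment, min_tasks, max_tasks)


def scheduled_scaling(now, schedule, default_tasks):
    """schedule: (start, end, tasks) windows using datetime.time values."""
    for start, end, tasks in schedule:
        if start <= now < end:
            return tasks
    return default_tasks


# Example inputs (all values are made up for illustration).
tracked = target_tracking(4, metric_value=90.0, target_value=60.0, min_tasks=1, max_tasks=10)
stepped = step_scaling(4, metric_value=85.0, alarm_threshold=70.0,
                       steps=[(0, 1), (10, 2), (20, 3)], min_tasks=1, max_tasks=10)
daytime = scheduled_scaling(time(9, 30), [(time(8, 0), time(18, 0), 6)], default_tasks=2)
print(tracked, stepped, daytime)
```

In practice you would configure these policies through the Application Auto Scaling service rather than computing task counts yourself; the sketch is only meant to show what each policy type reacts to.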

Second, you should review your Fargate container security policies to ensure that your containers are protected from unauthorized access. In particular:

- Start with a container base image that has a known source and publisher.

- Only trust public container registries that are official or run by the vendor.

- Make use of data encryption and user authentication and authorization techniques.
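As one example of putting the first two recommendations into practice, the sketch below checks a container image reference against an allowlist before it is used in a task definition. It is a minimal illustration, not a complete security control: the function names and the `registry.vendor.example` allowlist entry are hypothetical, and the parsing follows Docker’s usual naming convention, where an image with no registry prefix is pulled from Docker Hub and official images have no namespace (or the `library/` namespace).

```python
# Hypothetical allowlist of vendor-run registries; replace with your own.
TRUSTED_VENDOR_REGISTRIES = {"registry.vendor.example"}


def split_image(image):
    """Return (registry, repository path) for an image reference."""
    first, sep, rest = image.partition("/")
    # The first component is a registry host if it looks like a hostname.
    if sep and ("." in first or ":" in first or first == "localhost"):
        return first, rest
    return "docker.io", image


def is_trusted(image):
    """Accept official Docker Hub images and images from allowlisted registries."""
    registry, path = split_image(image)
    if registry == "docker.io":
        # Strip any digest or tag, then check for a user namespace.
        repository = path.split("@")[0].split(":")[0]
        return "/" not in repository or repository.startswith("library/")
    return registry in TRUSTED_VENDOR_REGISTRIES


for candidate in ["nginx:latest",
                  "someuser/nginx:latest",
                  "registry.vendor.example/team/app:1.0",
                  "registry.unknown.example:5000/app"]:
    print(candidate, is_trusted(candidate))
```

A check like this could run in a build pipeline before a task definition is registered, alongside the encryption and access controls mentioned above.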

For a complete overview of how to handle Fargate container security, you can watch the AWS online tech talk “Securing Container Workloads on AWS Fargate.”

AWS Fargate Alternatives: Iron.io

As you can see, there’s a lot of work involved in getting started with AWS Fargate, and we’ve only just scratched the surface. Unfortunately, a steep learning curve is one of the drawbacks most commonly cited in AWS Fargate reviews.

If you’re looking for a serverless compute engine with a much gentler learning curve, give IronWorker a try. IronWorker is a serverless solution for background job processing that has been built from the ground up for high performance and concurrency. Even better, IronWorker can run in whatever IT environment best fits your needs: in the public cloud, on-premises, on a dedicated server, or using a hybrid model with both on-premises and the cloud. This helps you avoid vendor lock-in problems that are a frequent complaint among AWS customers.

Unlock the Cloud with Iron.io

Find out why some of the world’s most popular websites rely on IronWorker for high performance and availability. Get in touch with our team today for a chat about your needs and objectives, and a free trial of IronWorker.